Why 87% of AI Initiatives Fail: The Human-Centered Solution Leaders Miss

- saafir.jenkins

- Dec 24, 2025

- 5 min read

The statistics are sobering: despite billions invested in artificial intelligence, 87% of AI initiatives never reach production. Even more concerning? The companies experiencing these failures aren't addressing the real culprit. While executives pour resources into cutting-edge algorithms and sophisticated models, they're systematically ignoring the human-centered fundamentals that determine success or failure.

The uncomfortable truth is that AI failure isn't a technology problem: it's an organizational discipline problem. And until leaders recognize this distinction, they'll continue watching expensive AI initiatives collapse under the weight of poor data hygiene, broken processes, and organizational dysfunction.

The Real Reason AI Initiatives Collapse

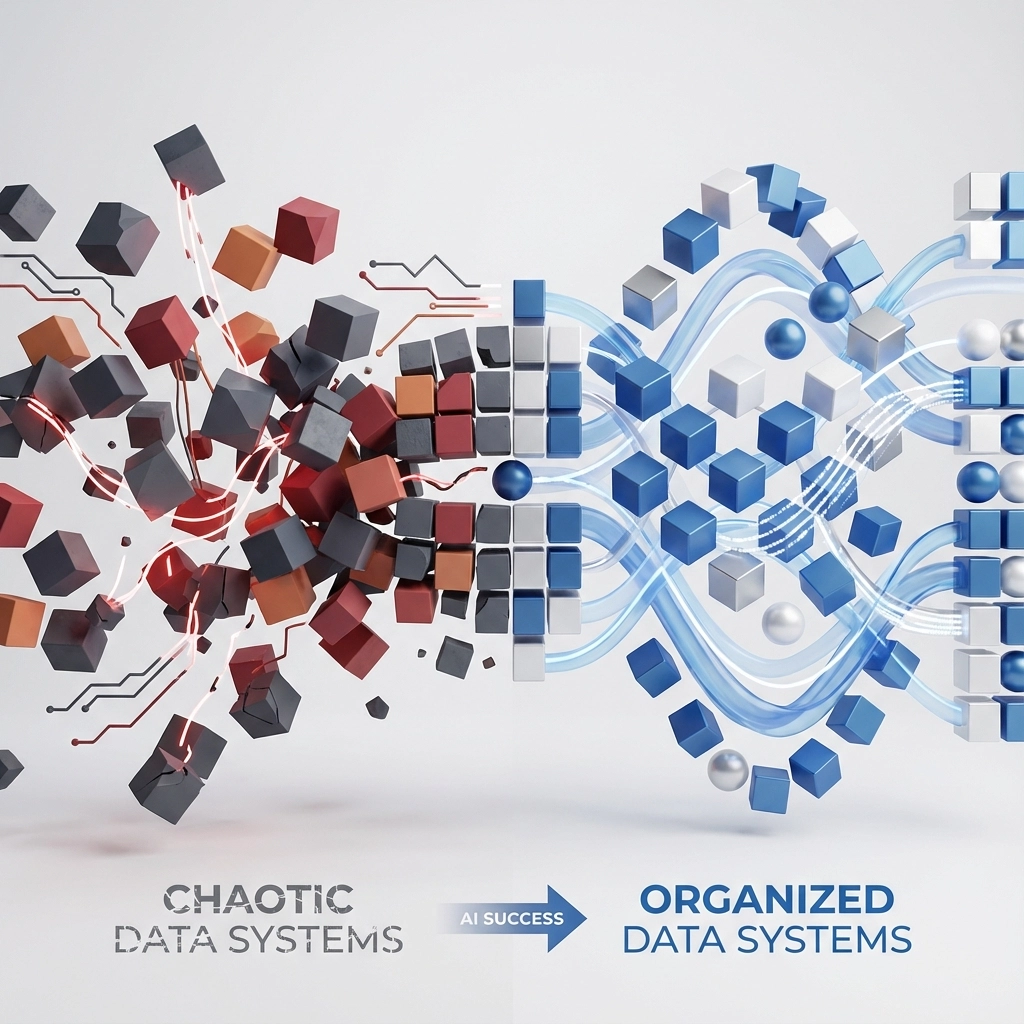

Here's the critical insight most leaders miss: AI amplifies what you already are. If your data is clean and your processes work efficiently, AI transforms you into an unstoppable competitive force. If your organizational fundamentals are broken, AI simply helps you fail faster and more expensively.

This amplification effect explains why only 15% of companies succeed with AI while the vast majority struggle. The successful organizations aren't doing anything magical with their technology choices. They're doing the unglamorous work of fixing their foundational problems before layering AI on top.

Consider this: if your CRM system is a disorganized "junk drawer" of inconsistent data, what happens when you feed that chaos into an AI system? You get sophisticated algorithms producing garbage outputs at lightning speed. The AI didn't fail: your organizational discipline failed.

The Three Critical Failure Points

Research reveals that AI initiative failures cluster around three interconnected areas: science, decisions, and people. In practice, they surface as three failure points that leaders consistently underestimate: data quality, process dysfunction, and the gap between prototype and production.

Data Quality: The $12.9 Million Problem

Poor data quality emerges as the primary culprit in AI project failures, yet organizations continue neglecting data hygiene while investing heavily in advanced algorithms. It's like fueling a Ferrari with contaminated gasoline: the engine's sophistication becomes irrelevant when the inputs are compromised.

The IBM Watson Health case study illustrates this dynamic. Watson's cancer treatment recommendations fell short not because of algorithmic limitations but because different hospitals used varying formats, terminologies, and recording methods for patient data. The AI system couldn't overcome that fundamental inconsistency in its training data.
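To make the format-and-terminology problem concrete, here is a minimal Python sketch of record normalization, assuming two hypothetical hospital feeds that record the same diagnosis and visit date in different ways. The source names, synonym table, and date formats are illustrative assumptions, not a description of how Watson's data pipeline worked; a real system would map to an established clinical vocabulary rather than a hand-written dictionary.

```python
from datetime import datetime

# Hypothetical synonym table: each source's local term -> one canonical label.
# Entries are illustrative only, not a real clinical vocabulary.
CANONICAL_TERMS = {
    "nsclc": "non-small cell lung cancer",
    "non-small cell lung carcinoma": "non-small cell lung cancer",
    "non-small cell lung cancer": "non-small cell lung cancer",
}

# Hypothetical date format used by each source system.
DATE_FORMATS = {
    "hospital_a": "%m/%d/%Y",
    "hospital_b": "%Y-%m-%d",
}


def normalize_record(source: str, record: dict) -> dict:
    """Return a copy of `record` with a canonical diagnosis and an ISO 8601 date.

    Raises KeyError for an unknown source or an unmapped term, so gaps in the
    standard surface immediately instead of flowing silently into training data.
    """
    term = record["diagnosis"].strip().lower()
    visit = datetime.strptime(record["visit_date"], DATE_FORMATS[source]).date()
    return {
        "source": source,
        "diagnosis": CANONICAL_TERMS[term],
        "visit_date": visit.isoformat(),
    }


records = [
    ("hospital_a", {"diagnosis": "NSCLC", "visit_date": "03/14/2024"}),
    ("hospital_b", {"diagnosis": "Non-Small Cell Lung Carcinoma", "visit_date": "2024-03-14"}),
]

for source, rec in records:
    print(normalize_record(source, rec))
```

Both inputs describe the same clinical fact; after normalization they agree on diagnosis and date, which is the consistency a model needs before it can learn anything useful from pooled data.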

Gartner estimates that poor data quality costs organizations an average of $12.9 million annually through direct losses, missed opportunities, and remediation efforts. Yet most leadership teams allocate budget for AI tools while under-investing in the data infrastructure that makes those tools effective.

Organizational Process Dysfunction

The second failure point lies in organizational processes. Companies with poor track records in digital transformation (an area where, according to industry research, only 12% succeed) attempt to overlay AI onto their existing dysfunction. This creates a multiplication effect in which broken processes generate compounding problems.

The pattern is predictable: organizations identify an AI use case, build a prototype that shows promise in controlled conditions, then watch it crumble when exposed to real-world operational chaos. The AI isn't failing; it's revealing the extent of existing process problems that leadership previously ignored or worked around manually.

Tool sprawl compounds this challenge significantly. The median number of SaaS applications per organization grew from eight in 2015 to 80 by 2023, with some organizations running more than 200 different tools. Employees now spend 1.8 hours a day navigating between applications, and only 29% report being satisfied with their toolset. Adding AI tools to this environment creates more complexity rather than solving the underlying efficiency problems.

The Deployment Gap

Even when AI projects show initial promise, many never make the transition from prototype to production. Two obstacles stand out in creating this deployment gap:

Service Complexity: Building production-grade machine learning platforms requires integrating multiple technologies, then testing and maintaining them at scale. This process demands substantial resources and time, creating bottlenecks that delay deployment and frustrate development teams.

Data Access and Security Tensions: Organizations face a fundamental tension between data scientists needing comprehensive data access for model accuracy and security teams requiring strict governance protocols. When access policies are misconfigured or governance frameworks are absent, data scientists work with limited, less useful data subsets that compromise model performance.
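One way to ease the access-versus-security tension is to pseudonymize or drop sensitive columns rather than withholding whole datasets, so data scientists keep complete, representative rows. The sketch below assumes a hypothetical column policy and a placeholder key; it illustrates the idea and is not a complete privacy control.

```python
import hashlib
import hmac

# Hypothetical policy: which columns are sensitive and how each is handled.
SENSITIVE_COLUMNS = {"email": "pseudonymize", "ssn": "drop"}

# Placeholder key for illustration; in practice the security team would hold and rotate it.
PSEUDONYM_KEY = b"example-key-held-by-security-team"


def pseudonymize(value: str) -> str:
    """Replace a value with a stable keyed hash: same input, same token, raw value hidden."""
    return hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def apply_policy(row: dict) -> dict:
    """Return a copy of `row` with the policy applied; other columns pass through unchanged."""
    out = {}
    for column, value in row.items():
        action = SENSITIVE_COLUMNS.get(column)
        if action == "drop":
            continue  # column is removed entirely
        out[column] = pseudonymize(value) if action == "pseudonymize" else value
    return out


rows = [
    {"email": "a@example.com", "ssn": "123-45-6789", "region": "west", "spend": 1200},
    {"email": "a@example.com", "ssn": "123-45-6789", "region": "west", "spend": 300},
]

safe_rows = [apply_policy(r) for r in rows]
for r in safe_rows:
    print(r)
```

A keyed HMAC is used instead of a plain hash because a plain hash of a guessable value such as an email address can be reversed by hashing candidates; with the key held by the security team, analysts can still group the two purchases by the same customer without seeing who that customer is.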

The Strategic Implications for Leadership

The failure rate has serious strategic implications beyond individual project disappointments. Organizations experiencing repeated AI failures face several cascading problems:

Resource Allocation Inefficiency: Teams spend increasing amounts of time on technology selection and vendor evaluation instead of addressing foundational readiness. This creates opportunity costs where organizational energy goes toward tool comparison rather than capability building.

Talent Retention Challenges: Data scientists and AI practitioners become frustrated working in environments where their projects consistently fail due to organizational limitations beyond their control. High-value technical talent seeks opportunities where their expertise can produce measurable impact.

Competitive Disadvantage: While organizations struggle with AI implementation, competitors who have invested in organizational discipline begin realizing compound advantages from their successful AI deployments. This creates expanding performance gaps that become difficult to close.

The Human-Centered Solution Framework

Moving from the majority of organizations whose AI initiatives stall to the roughly 15% that succeed requires a systematic approach that prioritizes organizational readiness over technological sophistication.

Establish Data Readiness Before AI Investment

Audit Current Data Quality: Conduct comprehensive assessments of data consistency, completeness, and accuracy across all systems that will feed AI applications. Identify specific gaps in formatting, terminology, and governance policies.

Standardize Data Infrastructure: Create unified data standards, establish clear governance frameworks, and implement quality monitoring systems. This foundational work often takes 6 to 12 months but can prevent years of failed AI projects.

Build Data Literacy: Ensure that teams understand how data quality impacts AI performance. This includes training non-technical stakeholders on the relationship between data inputs and AI outputs.
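The audit step can be sketched as a small Python script that scores each field for completeness (share of rows with a value) and validity (share of all rows whose value matches an expected format). The CRM-style records, format rules, and 95% threshold below are hypothetical assumptions; a real audit would use rules agreed with each system's data owners.

```python
import re

# Hypothetical CRM extract; in practice this would come from each system feeding the AI application.
records = [
    {"email": "ana@example.com", "phone": "555-0100", "country": "US"},
    {"email": "", "phone": "5550101", "country": "USA"},
    {"email": "li@example", "phone": "555-0102", "country": "US"},
    {"email": "sam@example.com", "phone": None, "country": "United States"},
]

# Illustrative format rules: what a valid value looks like for each field.
FORMAT_RULES = {
    "email": re.compile(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\d{3}-\d{4}"),
    "country": re.compile(r"[A-Z]{2}"),  # expects two-letter country codes
}

THRESHOLD = 0.95  # hypothetical minimum acceptable score


def audit(rows, rules):
    """Return {field: (completeness, validity)}, both as fractions of all rows.

    Missing values count against validity too, so validity <= completeness.
    """
    report = {}
    total = len(rows)
    for field, pattern in rules.items():
        present = [r.get(field) for r in rows if r.get(field)]
        valid = [v for v in present if pattern.fullmatch(v)]
        report[field] = (len(present) / total, len(valid) / total)
    return report


for field, (completeness, validity) in audit(records, FORMAT_RULES).items():
    flag = "OK" if min(completeness, validity) >= THRESHOLD else "FIX"
    print(f"{field:8} complete={completeness:.0%} valid={validity:.0%} {flag}")
```

Scoring validity against all rows, not just populated ones, keeps a mostly empty field from looking healthy; the per-field report gives teams a concrete list of gaps to close before any model sees the data.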

Fix Processes Before Adding Technology

Process Audit and Optimization: Document current workflows, identify inefficiencies, and streamline operations before introducing AI tools. AI should enhance efficient processes, not automate broken ones.

Tool Consolidation: Reduce application sprawl by consolidating functionality and eliminating redundant systems. Create clear integration paths for essential tools rather than adding AI as another disconnected solution.

Change Management Protocols: Establish structured approaches for evaluating, implementing, and measuring new technology adoption. This includes clear success criteria and rollback procedures for failed implementations.

Build Governance That Enables Rather Than Constrains

Balanced Access Policies: Create data access frameworks that maintain security while enabling data science teams to work with comprehensive, representative datasets. This requires collaboration between security, legal, and technical teams.

Clear Success Metrics: Define specific, measurable outcomes that connect AI performance to business results. Avoid vanity metrics that measure model development activity rather than organizational impact.

Cross-Functional Collaboration: Establish regular communication channels between technical teams, business stakeholders, and leadership to ensure alignment throughout AI project lifecycles.

The Path Forward

The organizations succeeding with AI aren't using different technology: they're applying different organizational discipline. They recognize that AI success depends more on foundational readiness than on algorithmic sophistication.

This creates a significant opportunity for leaders willing to invest in the unglamorous work of organizational improvement. While competitors chase the latest AI trends, disciplined organizations can build sustainable competitive advantages by focusing on data quality, process optimization, and change management capabilities.

The 87% failure rate isn't inevitable: it's the predictable result of treating AI as a technology problem rather than an organizational capability. Leaders who understand this distinction and act accordingly will find themselves in the 15% of companies where AI delivers transformational results.

Ready to assess your organization's AI readiness and develop a strategic implementation framework? Contact our team to discuss how human-centered solutions can dramatically improve your AI initiative success rates.
