Why 68% of AI Implementations Stall: The Psychological Safety Framework Your Change Plan Is Missing

- saafir.jenkins

- 6 days ago

Let’s be honest: your AI roadmap looks great on paper. You’ve got the vendor list, the API integrations are mapped out, and the projected ROI has the board nodding in approval. But six months in, momentum usually hits a wall. The tools are in place, but the "Agentic AI" future you promised hasn't materialized: the software sits idle or, worse, is actively resisted by the very people it was meant to empower.

The hard truth is that while the tech is evolving at breakneck speed, the human brain is still running on hardware designed for survival. When you introduce AI, your team doesn't see "operational excellence"; it sees a threat.

At Optimum Human Centered Solutions, we’ve seen this play out repeatedly. Current data suggests that nearly 68% of AI implementations stall before reaching full production. In most cases, it isn't because the LLM failed or the data was too messy. It’s because leadership failed to account for the Complexity Tax: the hidden cost of human friction.

If you want your AI strategy to actually move the needle, you need to stop looking at it as a technical upgrade and start treating it as a psychological transformation.

The Invisible Wall: Why "Standard" Change Management Fails AI

Most leaders use a traditional change management playbook: announce the change, hold a town hall, offer a 30-minute training session, and hope for the best. This works for a new CRM. It does not work for AI.

For employees, AI feels existential. It challenges their sense of sovereignty, competence, and job security. When people feel threatened, their cognitive load spikes and their willingness to experiment plummets. In a state of fear, the brain struggles to innovate.

This is where the concept of Psychological Safety moves from a "soft skill" to a "hard requirement" for your P&L. Without a framework that ensures employees feel safe to fail, safe to question, and safe to co-evolve with the machine, your implementation will stall.

The Three Pillars of the AI Psychological Safety Framework

To break through the 68% stall rate, you need a structured approach that prioritizes human-centered business optimization. Here is the framework we use at Optimum HCS to ensure AI adoption actually sticks.

1. Radical Transparency vs. The "Black Box" Approach

Silence is the enemy of adoption. When leadership is vague about why AI is being introduced, employees fill the silence with worst-case scenarios.

The Strategy: Be upfront about what AI can do, and what it cannot. Frame AI as a "co-pilot" or an "intern" rather than a replacement. Your team needs to know that their human intuition and domain expertise are the guardrails for the technology.

2. The Permission to Fail (The Sandbox)

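AI doesn't behave like traditional software. Its outputs are probabilistic, so the same request can produce different results, and its best uses only emerge through experimentation. If your culture punishes mistakes, your team will never push the boundaries of what the AI can do for your business.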

The Strategy: Create a literal "Sandbox" environment where employees are encouraged to break things. Reward the process of experimentation rather than just the immediate output. This lowers the stakes and allows the team to develop the "AI Fluency" necessary for long-term success.

3. Human-Centric Incentives

If AI makes an employee 30% more efficient and their "reward" is simply 30% more work, they are likely to sabotage the implementation, subconsciously or consciously.

The Strategy: Align AI success with employee well-being. If the tech saves time, reinvest some of that time into professional development or creative projects the employee actually cares about. Show them how the tech makes their job better, not just faster.

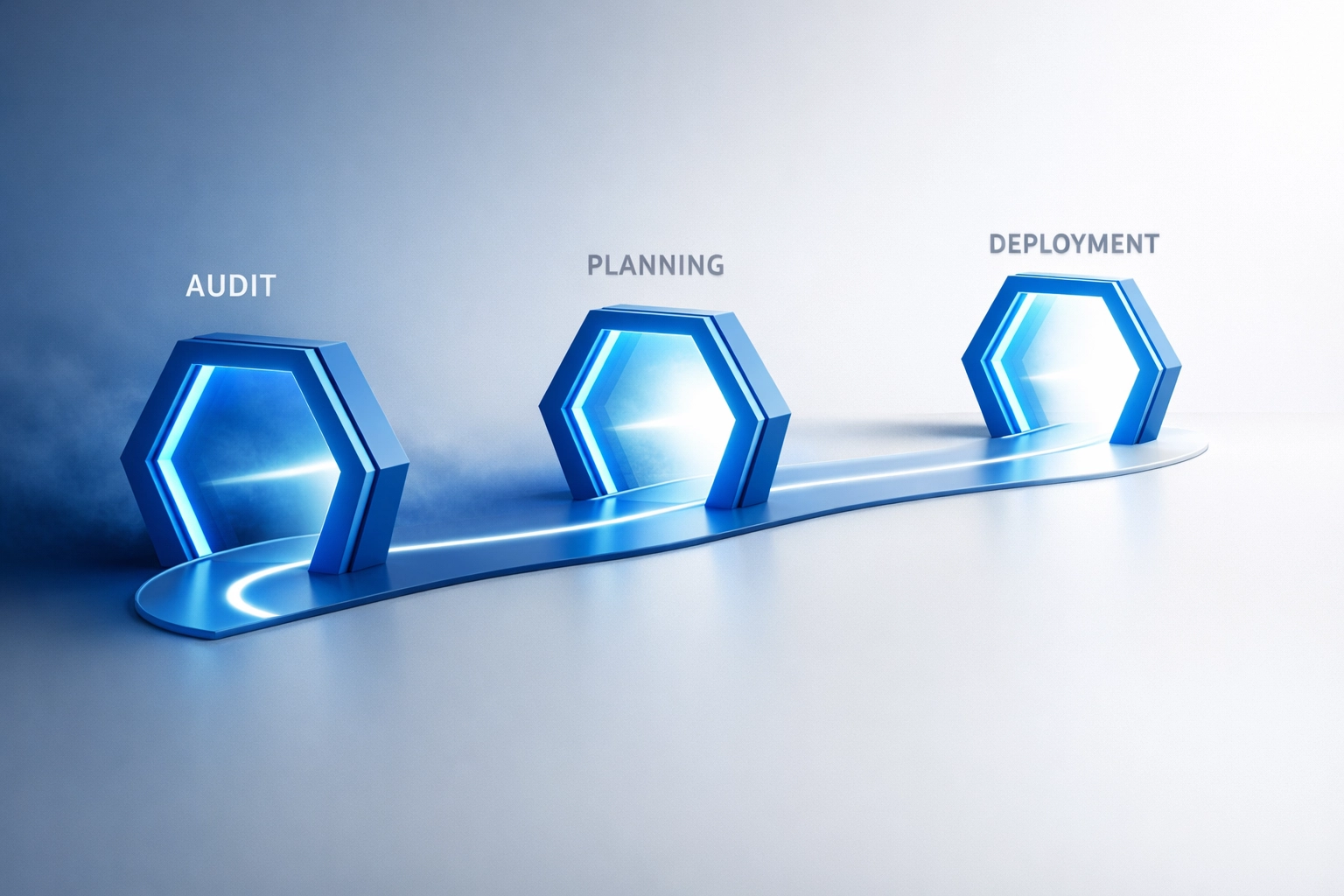

How to Deploy Your Psychological Safety Strategy: A Step-by-Step Guide

If you are ready to turn your AI project from a "stall" into a "success," follow these direct instructions to re-engineer your organizational culture.

Step 1: Audit Your Cultural Readiness

Before you spend another dollar on licensing, you must understand the current emotional temperature of your organization.

Open your internal communications platform (Slack, Teams, or email).

Send an anonymous survey that states its purpose openly and asks employees how they feel about AI.

Identify the specific fears: is it job loss, loss of autonomy, or just a lack of training?

Review the results to find your "Friction Points."

Step 2: Establish the "Sovereignty" Guardrails

Employees need to feel in control of the tools, not controlled by them.

Form a cross-functional "AI Governance" board and give it a dedicated workspace in your project management software.

Add representatives from every department, not just IT.

Define "Human-in-the-loop" protocols. Explicitly state where a human must make the final call.

Publish these guardrails to the entire company to rebuild trust.

Step 3: Launch a "Safe-to-Fail" Pilot

Don't roll AI out to the whole company at once. Start small and stay safe.

Select a low-stakes team or function (such as internal documentation or research).

Set a 30-day "Experimentation Window."

Tell the team: "Your goal is to find 5 ways this tool fails."

Collect the feedback and use it to refine your broader rollout. This turns "critics" into "beta testers."

The ROI of Safety: Why This Matters to the P&L

Leaders often ask: "Is this just making everyone feel good, or does it impact the bottom line?"

The answer is both. A stalled AI implementation is a massive drain on resources. You are paying for software seats that aren't being used, and you're losing the competitive advantage of increased velocity. When you invest in psychological safety, you are essentially reducing your "Complexity Tax."

By removing the human friction, you accelerate the adoption curve. High-trust teams implement changes 2x faster than low-trust teams. In the world of AI, speed isn't just a benefit; it's the only way to stay relevant.

If you're struggling with how to integrate these human elements into your tech stack, check out our guide on AI integration challenges and organizational culture. It breaks down the common mistakes leaders make when they forget the "human" in human-centered solutions.

Moving Forward: From Friction to Fluency

AI implementation isn't a "set it and forget it" task for the IT department. It is a continuous leadership challenge. If your project has stalled, don't look at the code; look at the culture. Are your people safe enough to be great?

At Optimum Human Centered Solutions, we specialize in bridge-building. We help you navigate the gap between cutting-edge technology and the humans who have to use it. Whether you need a full human-centered redesign of your HR operating model or a strategic framework for agentic AI governance, we are here to help.

Actionable Next Steps:

Schedule a 15-minute culture audit with your leadership team this week.

Ask one simple question: "If an employee found a way to automate 50% of their job today, would they feel safe telling us?"

Evaluate the silence that follows.

The future of business isn't just AI; it's AI powered by humans who aren't afraid of it. Let’s build that future together.

Let's Chat! If you’re ready to stop the stall and start the transformation, reach out to us at Optimum Human Centered Solutions. We’ll help you turn your AI roadmap into a reality.
